What You Should Be Aware Of When It Comes To Reinventing SEO Processes

- 12 May, 2020

- Jason Ferry

- SEO experts

Google’s search developer advocate, Martin Splitt, shared some tips on how to prevent indexing and JavaScript mistakes. He also warned developers and SEO experts against recreating SEO processes with technical workarounds. During the crawling and indexing session of Live with Search Engine Land, he said, “You might shoot yourself in the foot when you don’t expect it, so why would you build something more brittle if all it does is solve a non-problem?”

Splitt also explained why he and other Googlers don’t answer every question from everyone, and why Google is not as communicative as it would like to be.

Is It A Good Thing That Googlers Don’t Address Every Question?

Splitt stated, “[People say,] ‘Oh, but you as a Googler can’t talk about this.’ I can pretty much talk about nearly everything, it’s just often unwise to do so.” He further clarified that Google is a large company operating in many countries, and that not every employee knows what is going on in every part of it.

“For instance, ranking for me,” Splitt offered as an example. “I am not involved in ranking; I can look things up if I have to, but I really don’t want to because it’s not my domain, and when people ask me about ranking I don’t say anything, not because I am bound to not say anything about ranking, but because I just don’t know.”

Whether it concerns the ranking algorithms or the layout of the SERPs, every update that Google makes has huge repercussions for users and businesses. Google employees addressing topics they don’t know in depth could cause confusion, which may result in SEOs suggesting strategies that don’t actually work or that even harm rankings.

Google’s Communication Problems

Splitt is part of the Search Relations team, which is the link between Google’s internal teams and the SEO community. Its task is to handle all of that communication promptly and accurately, which is definitely challenging.

Splitt stated, “While most engineering teams work with us quite closely and are receptive for both feedback as well as giving us a heads up before they do things, sometimes some teams are a little less inclined to do that and then they just make changes and we find out randomly at some point when the change has already happened; that’s the rel=prev/next situation.” That particular situation caused disappointment among SEOs because the change had taken place years before it was communicated.

Splitt further explained, “We should have proactively communicated this change to happen, even though [that team] made the decision because they thought nothing externally changes.”

“But that’s not quite the reality because, as we know, [SEOs] advise clients and customers to make a change that costs them money — that wasn’t exactly fantastic and we were in the middle of this entire thing; a very unfortunate position.”

Earlier in the year, similar confusion arose when Google retracted some of its guidance concerning keywords in Google My Business (GMB) descriptions. Once the initial shock of such mistakes has passed, transparency and dialogue between the SEO community and Google tend to increase, hopefully resulting in less messy communication.

Indexing and JavaScript Mistakes

Addressing the unnecessarily complex SEO processes that some SEOs and developers choose to implement, Splitt stated, “These are things that worry me a lot and, oftentimes, it is either very over eagerly excited developers or SEOs who understand enough of the technology to be dangerous with it.”

Crawling problems can happen. Splitt described the issues he sees with both external and internal links: “We are still seeing websites not linking properly.”

“There is an HTML link tag, you put a URL in the href, that’s how you link and I don’t know why people are reinventing the wheel.” According to Splitt, such over-engineered solutions might work sometimes, but they can also fail in particular cases, and those failures typically involve crawlers.
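To make the point about plain links concrete, here is a minimal sketch in Python that scans a page for anchors a crawler may not be able to follow. It is not how Googlebot works internally; the `LinkAudit` class, the `audit_links` helper, and the sample markup are all hypothetical, and the rule used (an `<a>` element needs an `href` that is not empty, fragment-only, or a `javascript:` pseudo-URL) is a simplifying assumption.

```python
from html.parser import HTMLParser


class LinkAudit(HTMLParser):
    """Sorts <a> tags into links with a real href and suspect ones."""

    def __init__(self):
        super().__init__()
        self.crawlable = []  # href values that look like real URLs
        self.suspect = []    # href values (possibly empty) that do not

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        # A valueless or missing href comes back as None, so fall back to "".
        href = (dict(attrs).get("href") or "").strip()
        # Assumption: empty, fragment-only, and javascript: hrefs are not followable.
        if not href or href.startswith("#") or href.lower().startswith("javascript:"):
            self.suspect.append(href)
        else:
            self.crawlable.append(href)


def audit_links(html):
    """Return (crawlable, suspect) href lists for the given HTML string."""
    parser = LinkAudit()
    parser.feed(html)
    return parser.crawlable, parser.suspect


# Hypothetical markup: one plain link and three "reinvented" ones.
sample = """
<a href="/products/shoes">Shoes</a>
<a onclick="goTo('/products/hats')">Hats</a>
<a href="javascript:void(0)">Bags</a>
<span data-url="/products/belts">Belts</span>
"""
crawlable, suspect = audit_links(sample)
print(crawlable)  # ['/products/shoes']
print(suspect)    # ['', 'javascript:void(0)']
```

Note that the `<span>` with a `data-url` attribute never appears in either list: it is not a link at all as far as this audit is concerned, which is exactly the kind of navigation a crawler could miss.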

Splitt also stated, “My favorite question is ‘Can we, for Googlebot, not serve the CSS to save some bandwidth?’ and I’m like, ‘The straightforward answer to this question is yes, you can.’”

He warned, however, that “the real answer is no, you should not, because that means that you’re building in complexity to solve something that isn’t a problem in the first place.” Such tactics can hurt a website’s visibility in unexpected ways, wasting resources on implementation and extra time on fixing the problems that result.

Avoid JavaScript if there is an easier method. Splitt also explained that “brittle” technical workarounds and a lack of knowledge are two causes of JavaScript problems: “For instance, robots.txt is a classical thing where [SEOs and webmasters] are like, ‘I don’t know, Google has dropped my site from the index,’ and then you check and then it’s like, ‘Yeah, your robots.txt says that we shouldn’t take this URL.’” He added that when a website’s robots.txt file blocks Google, the content won’t be seen no matter how the JavaScript API is implemented.
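The robots.txt check Splitt describes can be approximated before blaming JavaScript. The sketch below uses Python's standard `urllib.robotparser` module; the `is_blocked` helper, the sample rules, and the example.com URLs are hypothetical. The standard-library parser applies simpler matching rules than Google's own robots.txt handling, so treat the result as a first-pass check rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text disallows url for user_agent."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks the whole product section for every crawler.
robots_txt = """\
User-agent: *
Disallow: /products/
"""

print(is_blocked(robots_txt, "https://example.com/products/shoes"))  # True
print(is_blocked(robots_txt, "https://example.com/about"))           # False
```

If the first call returns `True` for a URL that has dropped out of the index, the robots.txt rule is the likely culprit, regardless of how the page's JavaScript is written.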

Splitt added, “We do see people breaking websites for users rather than for search engines.”

“So, it is indexable, we do rank it, but it is terrible if you open it on a normal website because [you] are shipping like five megabytes of JavaScript for a very simple scrolling list of products.”

For years, Google has recommended creating websites for users rather than for search engines. After all, good rankings are of little benefit if users wait so long for the site to load that they bounce.

Reinforcing his recommendation to choose simple, effective techniques over over-engineered SEO processes that might cost a lot of money in the long term, Splitt said, “Another thing that I see relatively often is that people rely on JavaScript to do things that you can do without JavaScript; that’s not something that you need to inherently be careful about, it’s just something that I think is pointless.”

This Is Why It Is A Great Thing To Be On The First Page

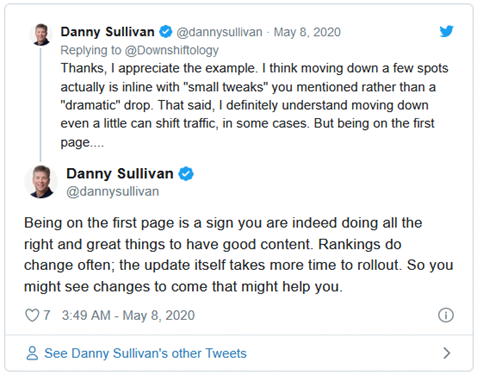

On Twitter, Google’s Danny Sullivan confirmed that you are doing the right things if your pages rank on the first page of Google for relevant search queries. He specifically stated, “being on the first page is a sign you are indeed doing all the right and great things to have good content.”

Sullivan further mentioned that, since rankings change, things can get better or worse. Likely referring to the May 2020 Core Update, he stated, “rankings do change often; the update itself takes more time to rollout.”

Sullivan added, “So you might see changes to come that might help you.”

This suggests that SEO UK professionals and site owners who are doing things the right way should keep an eye out for Google updates, as these can work in their favour. For those whose pages don’t rank on the first page of Google, there might be something wrong with what they’re doing.

Details in this post originally appeared on https://searchengineland.com/dont-try-to-reinvent-the-seo-wheel-says-googles-martin-splitt-334560?utm_source=feedburner&utm_medium=feed&utm_campaign=feed-main and https://www.seroundtable.com/google-first-page-doing-things-right-29431.html.

Achieving and maintaining good site rankings is tough if you don’t have the relevant expertise. This is why working with a professional SEO company is your best shot. Head over to the Position1SEO homepage to learn how our team can help you.