This Is How You Should Optimise Your Crawl Budget

- 18 February, 2020

- Jason Ferry

- SEO experts

According to Google's John Mueller, there is no benchmark that SEO experts and webmasters can treat as the optimal crawl budget for a site.

Mueller said this in response to a Reddit thread. A user there asked whether there is an ideal percentage of a site's pages that Googlebot should crawl each day to keep the content fresh.

Here's the full question:

“While everyone talks about crawl budget, I haven’t found a specific cutoff or a range for this. Like what should be the minimum percentage of pages out of total (or total changes made everyday) should be crawled by GoogleBot everyday to keep the content fresh?

I understand that this can vary a lot because lot depends upon static/variable content on the website. I am just trying to understand how to benchmark crawl budget.”

Mueller's reply was simply: "There’s no number."

In other words, if you are planning to increase your crawl budget, there is no ideal number to aim for. Even so, optimising a website's crawl budget still has clear advantages.

What’s a crawl budget?

Put simply, crawl budget is the number of URLs that Googlebot can crawl (based on how fast the site responds) and wants to crawl (based on user demand).

A higher crawl budget can help keep popular content fresh in Google's index and stop older content from going stale.

Factors that impact crawl budget

One of the best ways to improve crawl budget is to limit the number of low-value-add URLs on a site, such as:

• Session identifiers and faceted navigation

• Infinite spaces and proxies

• On-site duplicate content

• Hacked pages

• Soft error pages

• Spam and low-quality content

Pages like these can divert crawl activity away from the more important pages of a website.

SEO companies and website owners are also advised to check the crawl errors report in Google Search Console and to keep server errors to a minimum.

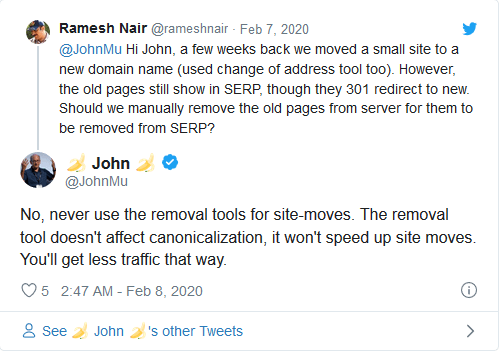

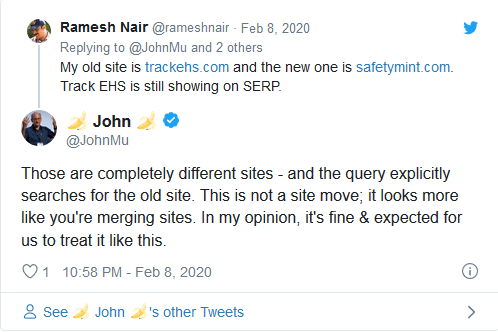

This Is Why a Merged Site Takes Longer To Drop Out Of Google Search Than a Moved Site

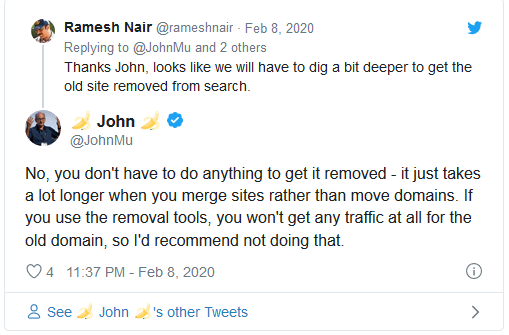

According to Google's John Mueller, when you merge two sites, the domain you are retiring takes time to disappear from Google's search results. A straightforward site move, such as switching to a new domain while keeping the same content and URLs, is processed much more quickly.

John Mueller posted on Twitter: "No, you don't have to do anything to get it removed - it just takes a lot longer when you merge sites rather than move domains. If you use the removal tools, you won't get any traffic at all for the old domain, so I'd recommend not doing that."


All details in this post were gathered from https://www.searchenginejournal.com/googles-john-mueller-there-is-no-benchmark-for-crawl-budget/348382/ and https://www.searchenginejournal.com/site-migrations-traffic/279953/. To learn more, follow the links.

By availing of the best SEO marketing services, you can improve your website's ranking and performance. Here at Position1SEO, we can help you make this a reality. Visit our homepage now.