What You Should Know About The Index Coverage Report Of Search Console

- 25 March, 2020

- Jason Ferry

- SEO experts

Google posted a training video on how to use the index coverage report of Search Console, which can be a useful reference for SEO experts and webmasters.

Daniel Waisberg of Google discusses how to use the Search Console to discover which web pages have been crawled and indexed by Google, as well as how to approach any issues found during that process.

First, the video provides an overview of the numerous components of the index coverage report and the right way to read the information included in them.

Index Coverage Report: What are its Contents?

The index coverage report of Search Console gives a thorough look at all webpages that Google has indexed or tried to index. In addition, the report records every error Googlebot came across when crawling a page.

The index coverage report consists of these components:

- Errors: These are critical problems that stop pages from getting indexed. Examples include webpages that return a server error, webpages that have the ‘noindex’ directive, and webpages that return a 404 error.

- Valid: These are indexed pages that are eligible to be served in search results.

- Valid with warnings: This includes webpages that may or may not appear in search results depending on the problem. One example is an indexed page being blocked by robots.txt.

- Excluded: These are typically webpages which are intentionally not indexed and won’t be included in search results.

On the index coverage report’s summary page, you will also notice a checkbox you can click to see impressions for indexed webpages in Google search.

How to Use the Index Coverage Report

Website owners and SEO companies should start by checking the chart on the summary page to see whether the trend for valid webpages is reasonably steady. Some fluctuation is normal. If you have recently published or removed content, expect to see that reflected in the report too.

Then, start looking at the different error sections. You can easily identify the most urgent problems since they’re categorised according to severity. Start at the top of the list and work your way down.

Once you know what needs your attention, you can either address the problems yourself, if you are comfortable doing so, or discuss the details with a developer who can make code changes to your website.

After the issue has been addressed, you can click “Validate Fix” and Google will validate the modifications.

When To Check The Index Coverage Report

According to Google, it’s not necessary to check the index coverage report every day because emails will be sent out whenever Google Search Console discovers a new indexing error.

But if an existing error worsens, Google will not send out an email notification. That’s why it’s still a good idea to review the index coverage report once in a while to make sure nothing gets out of hand.

Top 3 Things You Can Do To Solve Crawl Errors

No one loves crawl errors. They show up without warning and may lead to indexing problems.

In a Reddit AMA last year, Google Webmaster Trends Analyst Gary Illyes explained why you have to make your website crawlable:

“I really wish SEOs went back to the basics (i.e. MAKE THAT DAMN SITE CRAWLABLE) instead of focusing on silly updates and made up terms by the rank trackers, and that they talked more with developers…”

These tips will teach you how.

Finding and Fixing Index Bloat

Index bloat means Google has indexed more URLs for your site than it has actual webpages.

On a large scale, it can have a negative impact on your site’s performance in search. If it is severe enough, your crawl budget might be wasted.

Use the site: operator in Google search, with no space between the operator and your domain, to check for it. If the number of results is noticeably larger than the number of URLs your site actually has, it’s a problem.

This is what you should enter in Google, with your own domain in place of example.com: site:example.com
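The comparison described above can be sketched in a few lines of Python. This is only an illustration under some assumptions: the site publishes a standard XML sitemap that lists its real pages, the indexed count is typed in by hand from the site: results, and the function names `count_sitemap_urls` and `index_bloat_ratio` are made up for this example. Keep in mind that the result count Google shows for a site: query is an estimate, so treat the ratio as a rough signal.

```python
import xml.etree.ElementTree as ET

# Namespace used by the standard sitemap protocol (sitemaps.org).
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def count_sitemap_urls(sitemap_xml: str) -> int:
    """Count the <url><loc> entries in an XML sitemap given as a string."""
    root = ET.fromstring(sitemap_xml)
    return len(root.findall(f"{SITEMAP_NS}url/{SITEMAP_NS}loc"))


def index_bloat_ratio(indexed_count: int, known_url_count: int) -> float:
    """Return indexed / known. Values well above 1.0 hint at index bloat."""
    if known_url_count <= 0:
        raise ValueError("known_url_count must be positive")
    return indexed_count / known_url_count


# Hypothetical two-page sitemap used only for demonstration.
sample_sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/about/</loc></url>
</urlset>"""

known = count_sitemap_urls(sample_sitemap)
# Suppose the site: query reported roughly 6 results (entered manually).
ratio = index_bloat_ratio(indexed_count=6, known_url_count=known)
print(f"{known} known URLs, ratio {ratio:.1f}")
```

A ratio close to 1.0 suggests the index matches your real pages; a much higher one is a reason to look for duplicate or parameterised URLs.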

Misconfigured 4xx Problems and Soft 404s

For ordinary 404s, a 301 redirect to a working URL is a great solution. But what if a 404 is not an ordinary 404?

This is a frequent issue. A page without content can be a soft 404, even if it returns a 200 OK status.

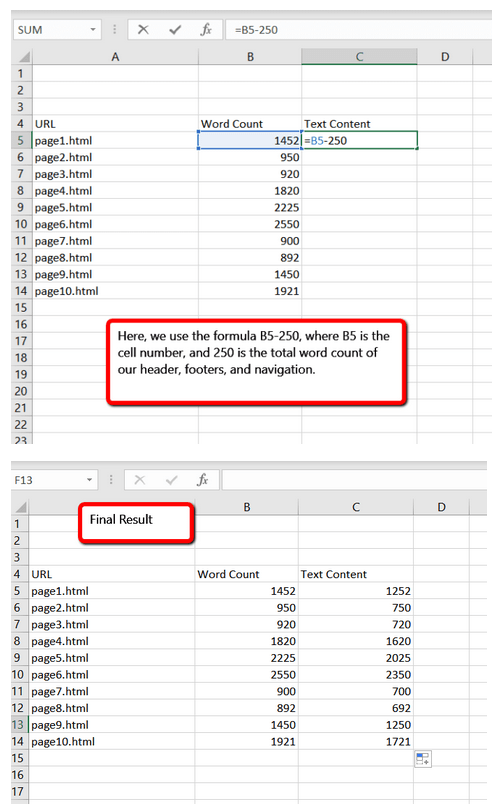

In Screaming Frog, the default word count covers every word on the webpage, not only the main content area. To spot pages with no content, export your crawl data and work on it in Excel.

In Excel, create a column next to Screaming Frog’s standard word count and subtract the combined word count of your own boilerplate (headers, footers, sidebars, and other repeated text) from the displayed total word count.
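The same subtraction can be scripted instead of done by hand. The sketch below assumes the crawl export is a CSV with columns named `Address`, `Status Code`, and `Word Count`; check the headers in your own export, since they may differ. The boilerplate word count and the 50-word threshold are illustrative values you would measure or choose for your own site, and `flag_thin_pages` is a hypothetical helper name.

```python
import csv
import io


def flag_thin_pages(crawl_csv, boilerplate_words, min_content_words=50):
    """Return (url, estimated_content_words) for 200 OK pages with little content.

    crawl_csv: an open text file (or file-like object) holding the crawl export.
    boilerplate_words: words contributed by header, footer, sidebars, etc.
    """
    flagged = []
    for row in csv.DictReader(crawl_csv):
        # Only pages that claim to be fine (200 OK) can be soft 404 candidates.
        if row["Status Code"] != "200":
            continue
        # Estimated main-content words, never below zero.
        content_words = max(int(row["Word Count"]) - boilerplate_words, 0)
        if content_words < min_content_words:
            flagged.append((row["Address"], content_words))
    return flagged


# Hypothetical export used only for demonstration.
sample_export = io.StringIO(
    "Address,Status Code,Word Count\n"
    "https://example.com/a,200,900\n"
    "https://example.com/b,200,210\n"
    "https://example.com/c,404,205\n"
)

thin_pages = flag_thin_pages(sample_export, boilerplate_words=200)
print(thin_pages)
```

With a real export you would pass `open("crawl.csv", newline="", encoding="utf-8")` in place of the sample. Pages the script flags are candidates only and still deserve a manual look.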

A second method is a lot more reliable but takes more time: manually examine your webpages to check whether they contain actual text content.

Misconfigured trailing slashes

Not all URLs are the same. A URL that ends in a forward slash (/), one that ends in .htm, and one that ends in .html are all different. A trailing forward slash denotes a folder, while .htm and .html denote file names.

When all three load, you’re serving three URLs with the same content.

Serving several indexable versions results in crawl errors and duplicate content problems.

If this problem is already present on your website, redirect all URL versions to a single primary version. This way, only one version loads.
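As a rough illustration of consolidating the variants, the sketch below builds a redirect map from a list of crawled URLs. It assumes you have picked the trailing-slash form as the primary version; that choice, and the helper names `canonical_url` and `redirect_map`, are assumptions made for this example. The resulting pairs would still need to be turned into 301 rules in whatever server or CMS you use.

```python
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Map /page, /page.htm and /page.html to the trailing-slash form /page/.

    Note: files such as index.html are not special-cased in this sketch.
    """
    parts = urlsplit(url)
    path = parts.path
    # Strip a file extension if present (check .html before .htm).
    for ext in (".html", ".htm"):
        if path.endswith(ext):
            path = path[: -len(ext)]
            break
    # Ensure the path ends with exactly the folder-style slash.
    if not path.endswith("/"):
        path += "/"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


def redirect_map(urls):
    """Return {variant: primary} for every URL that is not already primary."""
    return {u: canonical_url(u) for u in urls if canonical_url(u) != u}


# Hypothetical crawl results used only for demonstration.
crawled = [
    "https://example.com/page.htm",
    "https://example.com/page.html",
    "https://example.com/page/",
]

for source, target in redirect_map(crawled).items():
    print(f"301: {source} -> {target}")
```

If your site treats the .html form as primary instead, the same idea applies with the mapping reversed.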

Leaner is better

Don’t focus on content alone. These details matter to your website too. Build a better, leaner website with fully optimised crawlability. This is what users really want.

All details of this post were gathered from https://www.searchenginejournal.com/google-explains-how-to-use-the-search-consoles-index-coverage-report/356676/ and https://searchengineland.com/pro-tip-how-to-fix-3-not-so-obvious-crawl-errors-331226. Check out these links to learn more.
